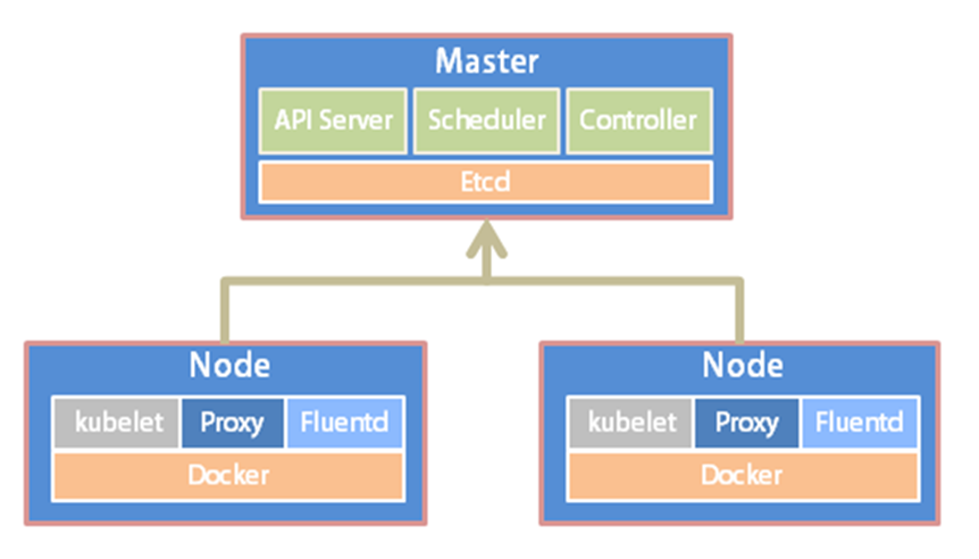

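I. Introduction to Kubernetes

Kubernetes (K8s for short) is an open-source container cluster management system that automates the deployment, scaling, and maintenance of container clusters. It is both a container orchestration tool and a leading distributed-architecture solution built on container technology. Building on Docker, it provides deployment, resource scheduling, service discovery, and dynamic scaling for containerized applications, which makes large container clusters easier to manage.

A K8s cluster has two types of nodes: management (control-plane) nodes and worker nodes. The management node is responsible for managing the cluster, for information exchange and task scheduling among nodes, and for the lifecycle of containers, Pods, Namespaces, PVs, and other resources. Worker nodes provide compute resources for containers and Pods; all Pods and containers run on worker nodes. Each worker node communicates with the management node through the kubelet service to manage container lifecycles, and also communicates with the other nodes in the cluster.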

II. Preparing the Environment

1. Environment architecture

| IP address    | Host name  | Kubelet version | Role            |
|---------------|------------|-----------------|-----------------|
| 192.168.0.199 | k8s-master | v1.25.2         | Management node |
| 192.168.0.198 | k8s-node2  | v1.25.2         | Worker node     |
| 192.168.0.197 | k8s-node1  | v1.25.2         | Worker node     |

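2. Configure host names (the following steps must be run on all nodes; set each node's own host name, then add the hosts entries everywhere)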

Master

[root@localhost ~]# hostnamectl set-hostname k8s-master --static

Node1

[root@localhost ~]# hostnamectl set-hostname k8s-node1 --static

Node2

[root@localhost ~]# hostnamectl set-hostname k8s-node2 --static

[root@k8s-master ~]# cat >>/etc/hosts <<EOF

192.168.0.199 k8s-master

192.168.0.198 k8s-node2

192.168.0.197 k8s-node1

EOF
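Because `cat >>` appends unconditionally, running the step above a second time duplicates the entries. The following is a minimal sketch of an idempotent alternative; the helper name `add_host_entry` is hypothetical and not part of the original procedure.

```shell
#!/bin/sh
# Append "IP NAME" to a hosts-style file only if NAME is not listed yet.
# $1 = file path, $2 = IP address, $3 = host name.
# The body runs in a subshell so the variables stay local.
add_host_entry() (
    file=$1; ip=$2; name=$3
    # -F: fixed string, -w: whole word, so k8s-node1 does not match k8s-node10
    if ! grep -qwF -- "$name" "$file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
)

# Example (run as root on every node):
#   add_host_entry /etc/hosts 192.168.0.199 k8s-master
#   add_host_entry /etc/hosts 192.168.0.198 k8s-node2
#   add_host_entry /etc/hosts 192.168.0.197 k8s-node1
```

Calling the helper repeatedly leaves a single line per host name.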

3. Disable the firewall and SELinux

[root@k8s-master ~]# systemctl stop firewalld.service

[root@k8s-master ~]# systemctl disable firewalld.service

[root@k8s-master ~]# setenforce 0

[root@k8s-master ~]# sed -i "s/SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

4. Disable swap and comment out the swap partition

[root@k8s-master ~]# swapoff -a

[root@k8s-master ~]# sed -i '/swap/s/^/#/g' /etc/fstab
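The `sed` expression above prepends `#` to every line containing "swap", so a second run would comment the same line again. A small sketch of a variant that skips lines that are already commented follows; the function name `comment_swap_entries` is an assumption made for illustration, and `-i`/`-E` rely on GNU sed.

```shell
#!/bin/sh
# Comment out swap entries in an fstab-style file, leaving lines that
# already start with '#' untouched. $1 = path to the file.
comment_swap_entries() {
    sed -i -E '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$1"
}

# Example (run as root):
#   comment_swap_entries /etc/fstab
```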

5. Configure kernel parameters so that bridged IPv4 traffic is passed to the iptables chains

[root@k8s-master ~]# cat >>/etc/modules-load.d/k8s.conf <<EOF

overlay

br_netfilter

EOF

[root@k8s-master ~]# modprobe overlay

[root@k8s-master ~]# modprobe br_netfilter

[root@k8s-master ~]# cat > /etc/sysctl.d/k8s.conf <<EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

EOF

[root@k8s-master ~]# sysctl --system
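After `sysctl --system`, it can be useful to confirm that the three values actually took effect. The sketch below wraps `sysctl -n` in a hypothetical helper named `check_sysctl`; the output wording is an illustration only.

```shell
#!/bin/sh
# Compare a kernel parameter with the value we expect.
# $1 = parameter name, $2 = expected value. Returns non-zero on mismatch.
check_sysctl() (
    key=$1; expected=$2
    actual=$(sysctl -n "$key" 2>/dev/null)
    if [ "$actual" = "$expected" ]; then
        echo "ok: $key = $actual"
    else
        echo "FAIL: $key = ${actual:-unset} (expected $expected)"
        return 1
    fi
)

# Example:
#   check_sysctl net.bridge.bridge-nf-call-ip6tables 1
#   check_sysctl net.bridge.bridge-nf-call-iptables 1
#   check_sysctl net.ipv4.ip_forward 1
```

If the `net.bridge.*` keys are reported as unset, the `br_netfilter` module is probably not loaded.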

6. Install Docker

[root@k8s-master ~]# curl -fsSL https://get.docker.com | bash -s docker --mirror Aliyun

[root@k8s-master ~]# systemctl enable docker

[root@k8s-master ~]# systemctl start docker

7. Add the Aliyun Docker registry mirror and set the cgroup driver

[root@k8s-master ~]# mkdir -p /etc/docker

[root@k8s-master ~]# cat >/etc/docker/daemon.json <<EOF

{

"registry-mirrors": ["https://fl791z1h.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"]

}

EOF

[root@k8s-master ~]# systemctl daemon-reload

[root@k8s-master ~]# systemctl restart docker
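The `native.cgroupdriver=systemd` option matters because clusters created with recent kubeadm releases run the kubelet with the systemd cgroup driver by default, and the container runtime should use the same driver. A minimal check, assuming Docker is running, could look like this (the function name is hypothetical):

```shell
#!/bin/sh
# Succeeds only if the running Docker daemon reports the systemd cgroup driver.
docker_uses_systemd_cgroups() {
    driver=$(docker info --format '{{.CgroupDriver}}' 2>/dev/null)
    [ "$driver" = "systemd" ]
}

# Example:
#   docker_uses_systemd_cgroups && echo "cgroup driver OK"
```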

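8. Install cri-dockerd

Reference: https://github.com/Mirantis/cri-dockerd. The simplest approach is to download the RPM package from the project's releases and install it directly.

Docker Engine does not implement the CRI, which a container runtime needs in order to work with Kubernetes. An additional service, cri-dockerd, must therefore be installed. cri-dockerd is based on the legacy built-in Docker Engine support, which was removed from the kubelet in version 1.24.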

[root@k8s-master ~]# wget https://github.com/Mirantis/cri-dockerd/releases/download/v0.2.6/cri-dockerd-0.2.6-3.el7.x86_64.rpm

[root@k8s-master ~]# yum -y install cri-dockerd-0.2.6-3.el7.x86_64.rpm

9. Configure the sandbox (pause) image

Edit cri-docker.service to set network-plugin and pod-infra-container-image; otherwise the later kubeadm init fails because containers cannot be started.

[root@k8s-master ~]# sed -i '/ExecStart/s#dockerd#& --network-plugin=cni --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.8#' /usr/lib/systemd/system/cri-docker.service

[root@k8s-master ~]# systemctl start cri-docker && systemctl enable cri-docker
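The `sed` command above inserts the two flags right after the first `dockerd` on the `ExecStart` line, and running it twice would insert them twice. The sketch below applies the same substitution behind a guard and demonstrates it on a temporary file; the sample `ExecStart` line and the helper name `patch_unit_once` are assumptions, and the real unit file may differ between cri-dockerd releases.

```shell
#!/bin/sh
CRI_FLAGS='--network-plugin=cni --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.8'

# Add the flags to the ExecStart line of a unit file unless already present.
# $1 = path to the unit file.
patch_unit_once() {
    if grep -q -- '--pod-infra-container-image' "$1"; then
        return 0
    fi
    sed -i "/ExecStart/s#dockerd#& ${CRI_FLAGS}#" "$1"
}

# Demonstration on a scratch copy (assumed sample line, not the real unit file).
demo=$(mktemp)
echo 'ExecStart=/usr/bin/cri-dockerd --container-runtime-endpoint fd://' > "$demo"
patch_unit_once "$demo"
patch_unit_once "$demo"   # second call is a no-op
cat "$demo"
rm -f "$demo"
```

When a unit file is edited on disk after systemd has already loaded it, `systemctl daemon-reload` is generally needed before the change takes effect.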

III. Install kubectl, kubelet, and kubeadm

1. Add the Aliyun Kubernetes repository

[root@k8s-master ~]# cat >/etc/yum.repos.d/kubernetes.repo <<EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

2. Install kubectl, kubelet, and kubeadm

[root@k8s-master ~]# yum -y install kubectl-1.25.2 kubelet-1.25.2 kubeadm-1.25.2

[root@k8s-master ~]# systemctl enable kubelet

IV. Deploy the Kubernetes Master

1. Initialize the K8s cluster

[root@k8s-master ~]# kubeadm init --kubernetes-version=1.25.2 \

--apiserver-advertise-address=192.168.0.199 \

--image-repository registry.aliyuncs.com/google_containers \

--service-cidr=172.15.0.0/16 --pod-network-cidr=172.16.0.0/16 \

--cri-socket unix:///var/run/cri-dockerd.sock

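Note: the Pod network is 172.16.0.0/16 and the API server address is the Master's own IP. The Service and Pod CIDRs can be chosen freely as long as they do not conflict with each other or with the node network.

This step is critical. By default kubeadm pulls the required images from the official Kubernetes registry (k8s.gcr.io, or registry.k8s.io in newer releases), which cannot be reached from mainland China, so --image-repository must point to the Aliyun mirror registry.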
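The requirement that the networks do not conflict can be checked mechanically: two CIDR blocks overlap exactly when their network addresses agree under the shorter of the two prefixes. The following sketch implements that test in plain shell arithmetic; the function names are hypothetical, and the `/24` mask for the node network is an assumption, since the article only lists individual node addresses.

```shell
#!/bin/sh
# Convert a dotted-quad IPv4 address to an integer (subshell keeps IFS local).
ip_to_int() (
    IFS=.
    set -- $1
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
)

# Print "overlap" or "disjoint" for two CIDR blocks such as 172.16.0.0/16.
cidr_relation() (
    a_ip=${1%/*}; a_len=${1#*/}
    b_ip=${2%/*}; b_len=${2#*/}
    # Compare both networks under the shorter (less specific) prefix.
    len=$a_len
    if [ "$b_len" -lt "$len" ]; then len=$b_len; fi
    mask=$(( (0xFFFFFFFF << (32 - len)) & 0xFFFFFFFF ))
    a=$(( $(ip_to_int "$a_ip") & mask ))
    b=$(( $(ip_to_int "$b_ip") & mask ))
    if [ "$a" -eq "$b" ]; then echo overlap; else echo disjoint; fi
)

cidr_relation 172.15.0.0/16 172.16.0.0/16    # Service CIDR vs Pod CIDR: disjoint
cidr_relation 192.168.0.0/24 172.16.0.0/16   # node network (assumed /24) vs Pod CIDR: disjoint
```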

After the cluster is initialized successfully, output like the following is returned:

[init] Using Kubernetes version: v1.25.2

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [k8s-master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [172.15.0.1 192.168.0.199]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [k8s-master localhost] and IPs [192.168.0.199 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [k8s-master localhost] and IPs [192.168.0.199 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 15.006652 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node k8s-master as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node k8s-master as control-plane by adding the taints [node-role.kubernetes.io/control-plane:NoSchedule]

[bootstrap-token] Using token: hpg3xk.pgaajueu0r9fpqpl

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.0.199:6443 --token hpg3xk.pgaajueu0r9fpqpl \

--discovery-token-ca-cert-hash sha256:186d7bffdba732c14e342a39c16842bb7388f4b43b8766d97dfaacb6af1974e4

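Note: record the last part of the output (the kubeadm join command). It must be run on the other nodes when they join the Kubernetes cluster.

2. Configure kubectl as prompted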

[root@k8s-master ~]# mkdir -p $HOME/.kube

[root@k8s-master ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8s-master ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@k8s-master ~]# export KUBECONFIG=/etc/kubernetes/admin.conf

3. Check the nodes and Pods

[root@k8s-master ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-master NotReady control-plane 7m42s v1.25.2

[root@k8s-master ~]# kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-c676cc86f-hz2sz 0/1 Pending 0 7m32s

kube-system coredns-c676cc86f-mlzs6 0/1 Pending 0 7m32s

kube-system etcd-k8s-master 1/1 Running 0 7m45s

kube-system kube-apiserver-k8s-master 1/1 Running 0 7m45s

kube-system kube-controller-manager-k8s-master 1/1 Running 0 7m45s

kube-system kube-proxy-qqrgn 1/1 Running 0 7m32s

kube-system kube-scheduler-k8s-master 1/1 Running 0 7m45s

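Note: the node is NotReady because no Pod network add-on has been installed yet, which is also why the coredns Pods are still Pending.

4. Install the Pod network plugin Calico (CNI)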

[root@k8s-master ~]# kubectl apply -f https://docs.projectcalico.org/manifests/calico.yaml

poddisruptionbudget.policy/calico-kube-controllers created

serviceaccount/calico-kube-controllers created

serviceaccount/calico-node created

configmap/calico-config created

customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/caliconodestatuses.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipreservations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/kubecontrollersconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org created

clusterrole.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrole.rbac.authorization.k8s.io/calico-node created

clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrolebinding.rbac.authorization.k8s.io/calico-node created

daemonset.apps/calico-node created

deployment.apps/calico-kube-controllers created

5. Check the Pods and nodes again

[root@k8s-master ~]# kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-59697b644f-9txn4 1/1 Running 0 6m6s

kube-system calico-node-pf6kt 1/1 Running 0 6m6s

kube-system coredns-c676cc86f-hz2sz 1/1 Running 0 14m

kube-system coredns-c676cc86f-mlzs6 1/1 Running 0 14m

kube-system etcd-k8s-master 1/1 Running 0 15m

kube-system kube-apiserver-k8s-master 1/1 Running 0 15m

kube-system kube-controller-manager-k8s-master 1/1 Running 0 15m

kube-system kube-proxy-qqrgn 1/1 Running 0 14m

kube-system kube-scheduler-k8s-master 1/1 Running 0 15m

[root@k8s-master ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-master Ready control-plane 15m v1.25.2

6. Join the worker nodes to the Kubernetes cluster; remember to add --cri-socket

[root@k8s-node1 ~]# kubeadm join 192.168.0.199:6443 --token hpg3xk.pgaajueu0r9fpqpl \

--discovery-token-ca-cert-hash sha256:186d7bffdba732c14e342a39c16842bb7388f4b43b8766d97dfaacb6af1974e4 \

--cri-socket unix:///var/run/cri-dockerd.sock

[root@k8s-node2 ~]# kubeadm join 192.168.0.199:6443 --token hpg3xk.pgaajueu0r9fpqpl \

--discovery-token-ca-cert-hash sha256:186d7bffdba732c14e342a39c16842bb7388f4b43b8766d97dfaacb6af1974e4 \

--cri-socket unix:///var/run/cri-dockerd.sock
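Bootstrap tokens expire (24 hours by default), so the join line recorded during `kubeadm init` may no longer work when a node is added later. A sketch of how a fresh join line could be produced on the Master and extended with the cri-dockerd socket follows; `kubeadm token create --print-join-command` is a standard kubeadm command, while the wrapper name `print_join_command` is hypothetical.

```shell
#!/bin/sh
# Print a ready-to-run join command that includes the cri-dockerd socket.
# Must be run on the Master, where kubeadm can create a new bootstrap token.
print_join_command() {
    join_line=$(kubeadm token create --print-join-command) || return 1
    printf '%s --cri-socket %s\n' "$join_line" "unix:///var/run/cri-dockerd.sock"
}

# Example:
#   print_join_command
# Copy the printed line and run it as root on the new worker node.
```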

7. Check the nodes again

[root@k8s-master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready control-plane 24m v1.25.2

k8s-node1 Ready <none> 3m22s v1.25.2

k8s-node2 Ready <none> 2m18s v1.25.2
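Newly joined nodes usually stay NotReady for a short while until the Calico Pod on them is running. Instead of re-running `kubectl get nodes` by eye, a small filter can list the nodes that are not yet Ready; the function name below is an assumption made for illustration.

```shell
#!/bin/sh
# Print the names of nodes whose STATUS column is anything other than "Ready".
# Note: a cordoned node ("Ready,SchedulingDisabled") is also listed.
not_ready_nodes() {
    kubectl get nodes --no-headers | awk '$2 != "Ready" { print $1 }'
}

# Example:
#   not_ready_nodes
```

`kubectl wait --for=condition=Ready nodes --all --timeout=300s` is an alternative that blocks until every node reports Ready or the timeout expires.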

8. kubectl command completion

[root@k8s-master kube-monitor]# yum -y install bash-completion

[root@k8s-master kube-monitor]# echo "source <(kubectl completion bash)" >> /etc/profile

[root@k8s-master kube-monitor]# source /etc/profile

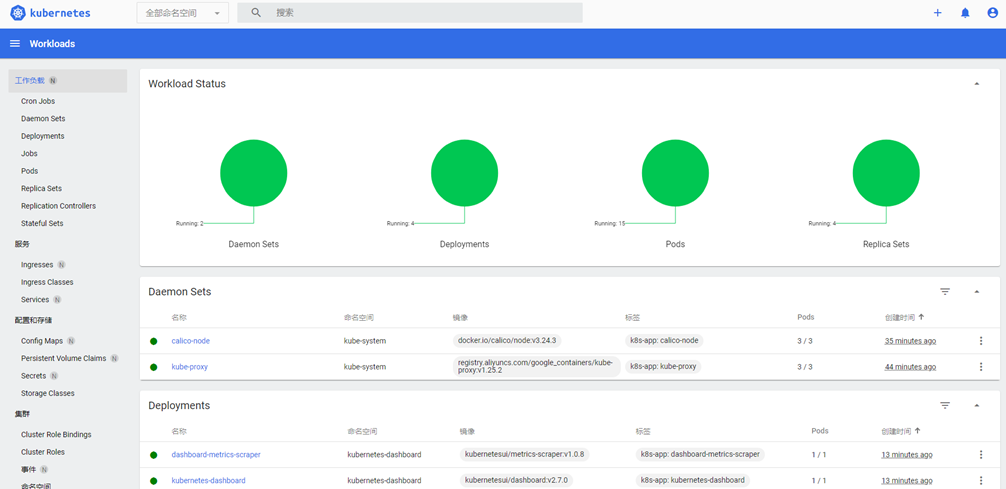

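V. Install kubernetes-dashboard

Note: the official Dashboard manifest does not expose the Service through a NodePort. Download the YAML file locally and add a NodePort to the Service.

1. Download the manifest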

[root@k8s-master ~]# wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.7.0/aio/deploy/recommended.yaml

2. Edit the manifest

[root@k8s-master ~]# vim recommended.yaml

The parts that need to be changed are shown below:

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  type: NodePort        # added
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 30000   # added
  selector:
    k8s-app: kubernetes-dashboard
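The value chosen for `nodePort` has to fall inside the API server's node port range, which is 30000-32767 unless the cluster was started with a different `--service-node-port-range`. A tiny validation sketch, with a hypothetical function name, assuming the default range:

```shell
#!/bin/sh
# Succeeds if $1 is an integer inside the default NodePort range 30000-32767.
is_valid_node_port() {
    case $1 in
        ''|*[!0-9]*) return 1 ;;   # empty or not purely numeric
    esac
    [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}

# Example:
#   is_valid_node_port 30000 && echo "30000 is usable"
```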

[root@k8s-master ~]# kubectl apply -f recommended.yaml

namespace/kubernetes-dashboard created

serviceaccount/kubernetes-dashboard created

service/kubernetes-dashboard created

secret/kubernetes-dashboard-certs created

secret/kubernetes-dashboard-csrf created

secret/kubernetes-dashboard-key-holder created

configmap/kubernetes-dashboard-settings created

role.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrole.rbac.authorization.k8s.io/kubernetes-dashboard created

rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

deployment.apps/kubernetes-dashboard created

service/dashboard-metrics-scraper created

deployment.apps/dashboard-metrics-scraper created

3. Check the Pods and Services

[root@k8s-master ~]# kubectl get pod,svc -n kubernetes-dashboard

NAME READY STATUS RESTARTS AGE

pod/dashboard-metrics-scraper-64bcc67c9c-t7hsl 1/1 Running 0 43s

pod/kubernetes-dashboard-5c8bd6b59-82nvx 1/1 Running 0 43s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/dashboard-metrics-scraper ClusterIP 172.15.108.161 <none> 8000/TCP 43s

service/kubernetes-dashboard NodePort 172.15.174.174 <none> 443:30000/TCP 43s

4. Access the Dashboard in a browser; Firefox is recommended

Enter https://192.168.0.199:30000/ in the browser, as shown in the figure below.

5. Create a user

[root@k8s-master ~]# vim dashboard-admin.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin
  namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
  - kind: ServiceAccount
    name: admin
    namespace: kubernetes-dashboard
---
apiVersion: v1
kind: Secret
metadata:
  name: kubernetes-dashboard-admin
  namespace: kubernetes-dashboard
  annotations:
    kubernetes.io/service-account.name: "admin"
type: kubernetes.io/service-account-token

[root@k8s-master ~]# kubectl apply -f dashboard-admin.yaml

serviceaccount/admin created

clusterrolebinding.rbac.authorization.k8s.io/admin created

secret/kubernetes-dashboard-admin created

6. Create a token

[root@k8s-master ~]# kubectl -n kubernetes-dashboard create token admin

eyJhbGciOiJSUzI1NiIsImtpZCI6IjE0aExvNVB0N2lLb1lpZTFvaGQwejM5VjFXSWUtV3dsNnR4b3BIcWRNdVEifQ.eyJhdWQiOlsiaHR0cHM6Ly9rdWJlcm5ldGVzLmRlZmF1bHQuc3ZjLmNsdXN0ZXIubG9jYWwiXSwiZXhwIjoxNjY3MDMwOTM1LCJpYXQiOjE2NjcwMjczMzUsImlzcyI6Imh0dHBzOi8va3ViZXJuZXRlcy5kZWZhdWx0LnN2Yy5jbHVzdGVyLmxvY2FsIiwia3ViZXJuZXRlcy5pbyI6eyJuYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsInNlcnZpY2VhY2NvdW50Ijp7Im5hbWUiOiJhZG1pbiIsInVpZCI6IjVkZGViNzkxLWNlMDEtNGE3Mi05ZDg1LTcwYWYzZTFjNWY2YyJ9fSwibmJmIjoxNjY3MDI3MzM1LCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZXJuZXRlcy1kYXNoYm9hcmQ6YWRtaW4ifQ.GYmv7T2ba_2x-w7lGzXP8ijw33cFN2Z1ZgJtjFn-0Uss5E-Y3em915zdj8eqvAmTc_AvkDFJgrfK8JW_CsXWv6efy4SNjX4a-Z3jsGZG-1Lg6X4fwhLwctJSyd5znG1O0xtqiLWbfLgwT2g0VybcKQwRpq-IQe4quHl9gKF9nS4wAO7Fd3H_CJOO5tEratHxrb7FKqjWMSfJiSkVHtQ_6gwTqpLIDWe_f8R5rPwP24GUNr7_-oXx05J-uLHnXefXbb8zlc3BnsL4GmV6Xq9J1pTN0FEJWfBNg610OWtecnVPcyhHF8fLLvrPXIr4MHNfqgpR2RcrHhKeTg9FrIVaUQ
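The string printed by `kubectl create token` is a JWT: three base64url segments separated by dots, where the middle segment is a JSON payload. In the sample above, the payload's `exp` and `iat` fields are 1667030935 and 1667027335, a difference of 3600 seconds, so that token is only valid for about one hour. A minimal sketch for inspecting the payload of any such token follows; the function name `jwt_payload` is hypothetical, and `base64 -d` assumes GNU coreutils.

```shell
#!/bin/sh
# Print the decoded JSON payload (second segment) of a JWT passed as $1.
# The signature is NOT verified; this is for inspection only.
jwt_payload() (
    # base64url uses '-' and '_' where standard base64 uses '+' and '/'
    payload=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
    # restore the '=' padding that JWTs omit
    while [ $(( ${#payload} % 4 )) -ne 0 ]; do
        payload="${payload}="
    done
    printf '%s' "$payload" | base64 -d
)

# Example:
#   jwt_payload "$(kubectl -n kubernetes-dashboard create token admin)"
```

Once the token expires, the Dashboard session ends and a new token has to be created.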

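7. Log in with the token just created, as shown in the figure below

Note: if no namespace can be selected after login and the page reports that resources cannot be found, the account lacks permissions. They can be granted with the following command: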

[root@k8s-master ~]# kubectl create clusterrolebinding serviceaccount-cluster-admin --clusterrole=cluster-admin --user=system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard

8. Retrieving the token from the Secret

Token=$(kubectl -n kubernetes-dashboard get secret |awk '/kubernetes-dashboard-admin/ {print $1}')

[root@k8s-master ~]# kubectl describe secrets -n kubernetes-dashboard kubernetes-dashboard-admin

This completes the kubeadm deployment of Kubernetes 1.25.2 on CentOS 7.9.


Kubernetes. Last updated: 2023-10-11
