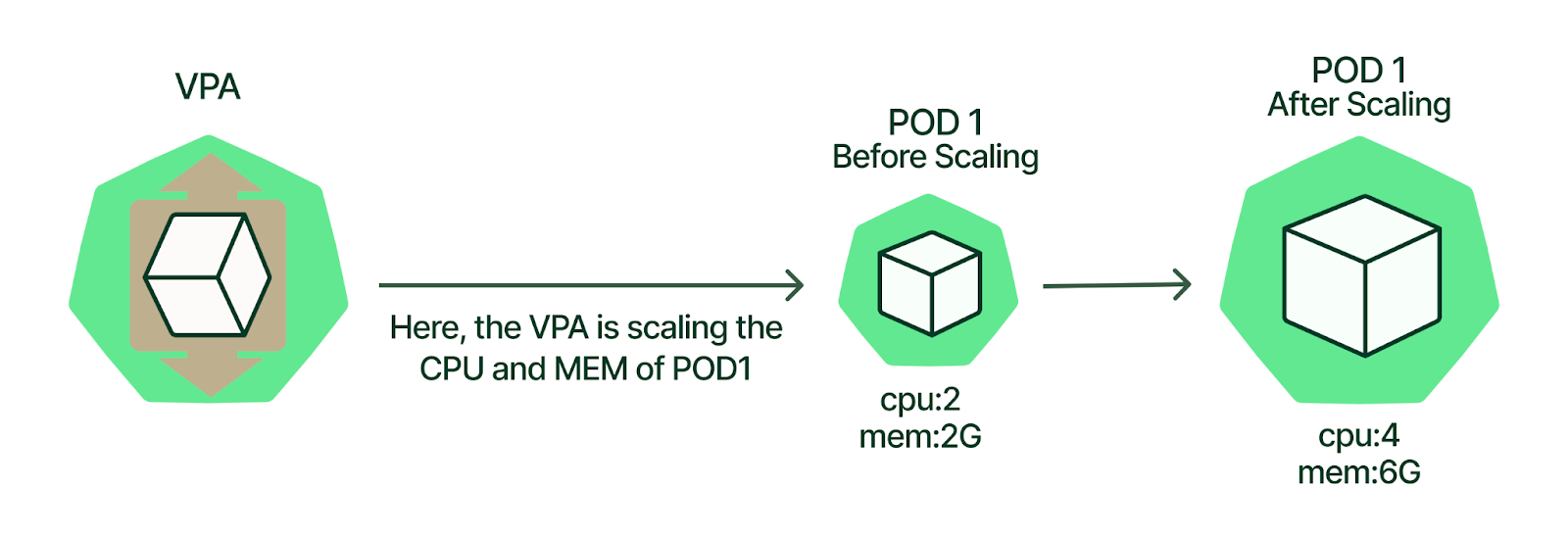

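Kubernetes supports two Pod autoscaling approaches: VPA (vertical) and HPA (horizontal). Put simply, VPA scales up by adjusting the resources of existing Pods, while HPA scales out by adding Pods. Applying a VPA change requires stopping (recreating) the Pod.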

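VPA Overview

VPA stands for Vertical Pod Autoscaler. It automatically sets container resource requests based on observed Pod resource usage, and it preserves the limit-to-request ratio specified in the original container definition. Users therefore do not need to set resource requests by hand for the containers in their Pods.

Once configured, VPA sets requests automatically based on usage, which lets the scheduler place each Pod on a node that has the appropriate amount of resources available.

- VPA scales vertically by increasing or decreasing the CPU and memory of the containers in existing Pods.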
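To illustrate what preserving the limit-to-request ratio means when a request is rewritten, here is a minimal Python sketch. The function name `scale_limit` and the rounding rule are assumptions made for this example; they are not taken from VPA's implementation.

```python
def scale_limit(orig_request_m: int, orig_limit_m: int, new_request_m: int) -> int:
    """Return a new limit (in millicores) that keeps the original limit:request ratio.

    Hypothetical helper for illustration only; VPA's real rounding may differ.
    """
    if orig_request_m <= 0:
        raise ValueError("original request must be positive")
    ratio = orig_limit_m / orig_request_m
    return round(new_request_m * ratio)


# A container defined with request=100m and limit=200m (ratio 2.0) that
# receives a new request of 250m would get a limit of 500m.
print(scale_limit(100, 200, 250))
```

The point of the sketch is only that the limit moves together with the request, so a container that was allowed to burst to twice its request can still do so after resizing.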

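VPA and HPA Autoscaling

Workload-level autoscaling exists to deal with the large, constant fluctuations in service load and with the gap between estimated and actual resource usage.

In a Kubernetes cluster, autoscaling generally falls into the following categories:

- Cluster Autoscaler (CA): scales the number of Nodes automatically; depends on an underlying pool of virtual machines.

- Vertical Pod Autoscaler (VPA): scales Pods vertically, for example by computing and adjusting the limits/requests of a Deployment's Pods; depends on historical workload metrics.

- Horizontal Pod Autoscaler (HPA): scales Pods horizontally, for example by changing a Deployment's replicas.

Autoscaling relies on cluster monitoring data such as CPU and memory usage.

VPA and HPA both optimize from the workload's point of view:

VPA addresses resource sizing, that is, CPU and memory values for a Pod that were estimated inaccurately.

HPA addresses excessive load that requires changing the number of replicas.

In short: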

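| Capacity change | Horizontal scaling (HPA) | Vertical scaling (VPA) |
|------|---------|------------|
| More resources | Add Pods | Increase the resources of the Pod's containers |
| Fewer resources | Remove Pods | Decrease the resources of the Pod's containers |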
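The difference between the two directions can be shown with a toy calculation. This is a sketch for intuition only: the function names and the even split of load across Pods are assumptions, and neither function reproduces the real HPA or VPA algorithms.

```python
import math


def pods_needed(total_cpu_m: int, per_pod_cpu_m: int) -> int:
    """Horizontal: keep each Pod's size fixed and change the number of Pods."""
    return math.ceil(total_cpu_m / per_pod_cpu_m)


def per_pod_request(total_cpu_m: int, replicas: int) -> int:
    """Vertical: keep the number of Pods fixed and change each Pod's size."""
    return math.ceil(total_cpu_m / replicas)


# Serving a 1000m CPU demand:
print(pods_needed(1000, 250))    # horizontal: number of 250m Pods
print(per_pod_request(1000, 1))  # vertical: size of a single Pod in millicores
```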

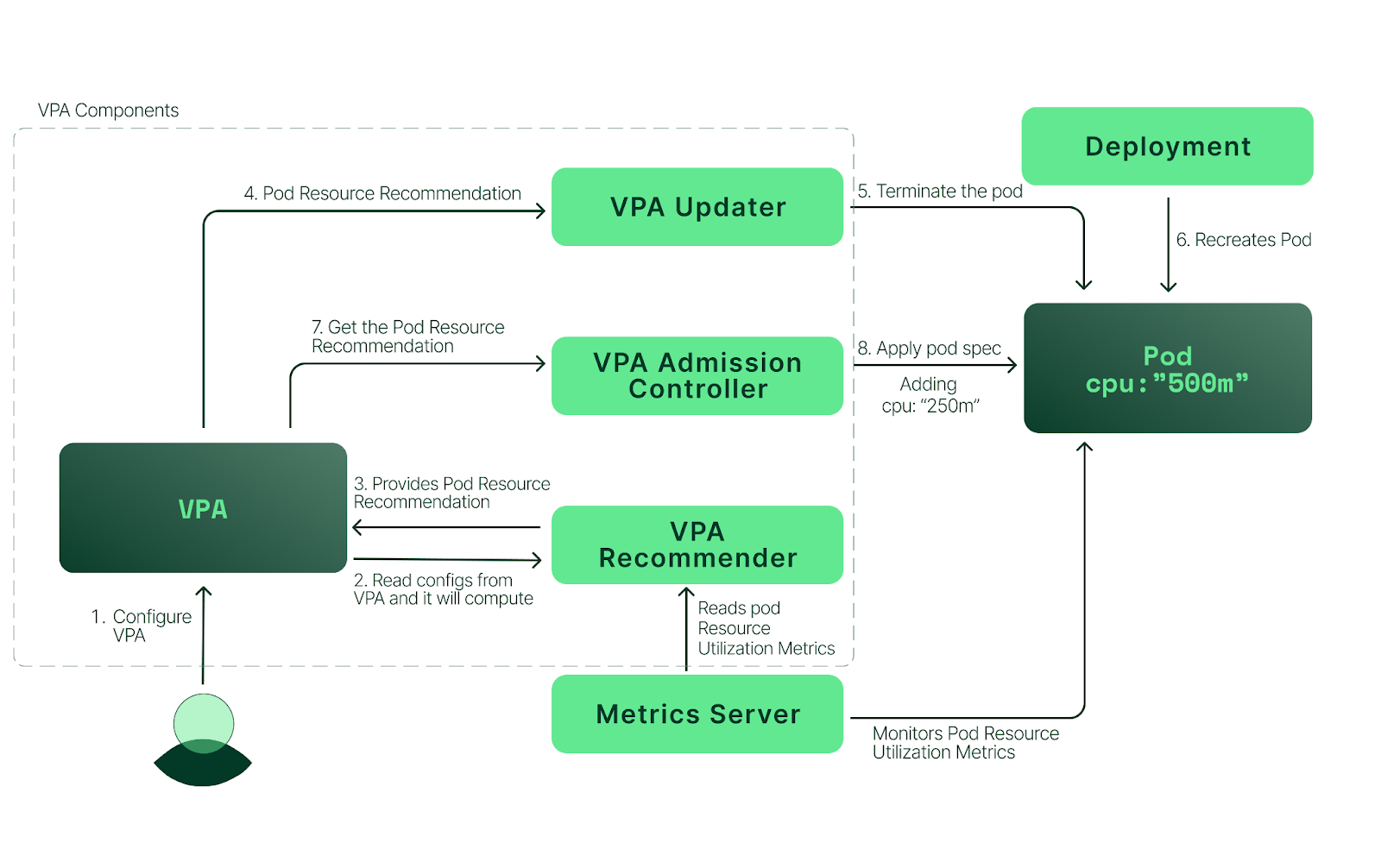

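VPA Components

- VPA Recommender

  - Monitors resource utilization and computes target values.

  - Looks at metric history and OOM events, and raises or lowers limits according to the defined limit-to-request ratio.

- VPA Updater

  - Evicts Pods that need new resource settings.

  - With updateMode: Recreate, resource requests are modified both when a Pod is created and when it is updated; in addition, VPA evicts a Pod whenever its requests differ from the new recommendation and restarts it with the recommended values. This mode is therefore rarely used, and Auto is preferred, unless you really need requests to always match the latest recommendation.

- VPA Admission Controller

  - When the VPA Updater evicts a Pod and it is restarted, changes the CPU and memory settings before the new Pod starts.

  - With updateMode: Auto, a Pod whose resource requests need to change is evicted, because recreating the Pod is the only way to change the resources of a running Pod.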

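How VPA Works

- First, create the Deployment and the VPA object.

- When the Recommender finds a VPA object, it fetches the current CPU and memory usage of all Pods bound to that VPA from the metrics API, combines it with historical data (kept in a CRD object that VPA maintains), and produces recommendations for every container covered by the VPA. The current data is also stored as history.

- The Updater watches VPA resources. Once a VPA has a recommendation, the Updater decides whether it needs to be applied by checking how far the recommendation is from the values the Pod is currently running with: if the gap is large it updates the Pod, otherwise it does nothing. The update logic itself is very simple: the Updater just evicts the Pod.

- The Admission Controller handles Pod re-creation: whenever a Pod controlled by a VPA is created, it sets the Pod's resources to the VPA recommendation.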
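The Updater's "is the gap too large?" check described above can be sketched as follows. This is a simplified illustration: the function name `needs_eviction` and the 10% tolerance are assumptions chosen for the example, not values read from the VPA source.

```python
def needs_eviction(current_m: int, recommended_m: int, tolerance: float = 0.10) -> bool:
    """Return True when the Pod's current request is too far from the recommendation.

    current_m / recommended_m are CPU values in millicores.
    tolerance is a hypothetical relative threshold (10% by default).
    """
    if recommended_m <= 0:
        raise ValueError("recommendation must be positive")
    relative_gap = abs(recommended_m - current_m) / recommended_m
    return relative_gap > tolerance


# A Pod requesting 100m with a 250m recommendation is far off: evict it.
print(needs_eviction(100, 250))
# A Pod already running with the recommended value is left alone.
print(needs_eviction(250, 250))
```

After an eviction, the workload controller creates a replacement Pod, and the Admission Controller applies the recommendation to it at creation time.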

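VPA Notes and Limitations

• Monitoring data is obtained from Metrics Server.

• HPA and VPA cannot both be enabled for the same workload, unless the HPA only uses custom or external metrics.

• When VPA updates a Pod's resources, the Pod is recreated and restarted, and may even be rescheduled onto another node.

• VPA uses an admission webhook as its admission controller. If the cluster has other admission webhooks, make sure they do not conflict with VPA's.

• VPA reacts to most OOM (Out Of Memory) events, but this is not guaranteed to work in every scenario.

• VPA performance has not yet been tested in large clusters.

• The requests VPA sets may exceed what is actually available (node capacity, free resources, or resource quota), leaving the Pod Pending and unschedulable. Using Cluster Autoscaler alongside VPA can mitigate this to some extent.

• Multiple VPA objects matching the same Pod results in undefined behavior.

• VPA currently supports only the built-in Kubernetes controllers, not custom (extension) controllers.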

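The Four VPA Update Modes

VPA has four operating modes, selected through updateMode:

- Auto: the default. Resource requests are set when a Pod is created and are also updated on existing Pods. In the VPA version used in this article (0.12), Auto relies on the same eviction mechanism as Recreate.

  This VPA feature is experimental and may cause downtime for your application.

- Recreate: like Auto, resource requests are modified both when a Pod is created and when it is updated. The difference is that VPA evicts a Pod whenever its requests differ from the new recommendation and restarts it with the recommended values. This mode is therefore rarely used, and Auto is preferred, unless you really need requests to always match the latest recommendation.

  This VPA feature is experimental and may cause downtime for your application.

- Initial: resource requests are set only when a Pod is created and are never changed afterwards.

- Off: Pod resource requests are not changed, but the recommendation is still computed and recorded in the VPA object.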
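The four modes can be summarized as a small lookup table. The sketch below is only a restatement of the descriptions above in Python; the `ModeBehavior` type and `describe_mode` helper are names invented for this example.

```python
from typing import NamedTuple


class ModeBehavior(NamedTuple):
    sets_requests_on_create: bool  # are requests rewritten when a Pod is created?
    evicts_running_pods: bool      # may running Pods be evicted to apply new values?


# Behaviour of each updateMode value, as described in the list above.
UPDATE_MODES = {
    "Auto": ModeBehavior(True, True),
    "Recreate": ModeBehavior(True, True),
    "Initial": ModeBehavior(True, False),
    "Off": ModeBehavior(False, False),
}


def describe_mode(mode: str) -> ModeBehavior:
    """Look up the behaviour of an updateMode, rejecting unknown values."""
    try:
        return UPDATE_MODES[mode]
    except KeyError:
        raise ValueError(f"unknown updateMode: {mode!r}") from None


print(describe_mode("Initial"))
```

Off is a convenient starting point when you only want to read recommendations, since it never touches running Pods.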

The VPA manifest format from the official documentation is as follows:

    apiVersion: autoscaling.k8s.io/v1
    kind: VerticalPodAutoscaler
    metadata:
      name: my-app-vpa
    spec:
      targetRef:
        apiVersion: "apps/v1"
        kind: Deployment
        name: my-app
      updatePolicy:
        updateMode: "Auto"

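Deploying VPA

Alibaba Cloud ACK already supports VPA. If you are on ACK, you can follow the Alibaba Cloud VPA documentation directly:

https://help.aliyun.com/document_detail/173702.html

This walkthrough uses Kubernetes 1.23.5.

VPA depends on metrics, so Metrics Server must be set up before deploying VPA.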

    mkdir /root/metrics-server
    cd /root/metrics-server
    cat > metrics-server.yaml << EOF
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      labels:
        k8s-app: metrics-server
      name: metrics-server
      namespace: kube-system
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      labels:
        k8s-app: metrics-server
        rbac.authorization.k8s.io/aggregate-to-admin: "true"
        rbac.authorization.k8s.io/aggregate-to-edit: "true"
        rbac.authorization.k8s.io/aggregate-to-view: "true"
      name: system:aggregated-metrics-reader
    rules:
    - apiGroups:
      - metrics.k8s.io
      resources:
      - pods
      - nodes
      verbs:
      - get
      - list
      - watch
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      labels:
        k8s-app: metrics-server
      name: system:metrics-server
    rules:
    - apiGroups:
      - ""
      resources:
      - nodes/metrics
      verbs:
      - get
    - apiGroups:
      - ""
      resources:
      - pods
      - nodes
      verbs:
      - get
      - list
      - watch
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: RoleBinding
    metadata:
      labels:
        k8s-app: metrics-server
      name: metrics-server-auth-reader
      namespace: kube-system
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: Role
      name: extension-apiserver-authentication-reader
    subjects:
    - kind: ServiceAccount
      name: metrics-server
      namespace: kube-system
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      labels:
        k8s-app: metrics-server
      name: metrics-server:system:auth-delegator
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: system:auth-delegator
    subjects:
    - kind: ServiceAccount
      name: metrics-server
      namespace: kube-system
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      labels:
        k8s-app: metrics-server
      name: system:metrics-server
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: system:metrics-server
    subjects:
    - kind: ServiceAccount
      name: metrics-server
      namespace: kube-system
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        k8s-app: metrics-server
      name: metrics-server
      namespace: kube-system
    spec:
      ports:
      - name: https
        port: 443
        protocol: TCP
        targetPort: https
      selector:
        k8s-app: metrics-server
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      labels:
        k8s-app: metrics-server
      name: metrics-server
      namespace: kube-system
    spec:
      selector:
        matchLabels:
          k8s-app: metrics-server
      strategy:
        rollingUpdate:
          maxUnavailable: 0
      template:
        metadata:
          labels:
            k8s-app: metrics-server
        spec:
          containers:
          - args:
            - --cert-dir=/tmp
            - --secure-port=4443
            - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname
            - --kubelet-use-node-status-port
            - --metric-resolution=15s
            - --kubelet-insecure-tls
            image: registry.cn-beijing.aliyuncs.com/abcdocker/metrics-server:v0.6.1
            imagePullPolicy: IfNotPresent
            livenessProbe:
              failureThreshold: 3
              httpGet:
                path: /livez
                port: https
                scheme: HTTPS
              periodSeconds: 10
            name: metrics-server
            ports:
            - containerPort: 4443
              name: https
              protocol: TCP
            readinessProbe:
              failureThreshold: 3
              httpGet:
                path: /readyz
                port: https
                scheme: HTTPS
              initialDelaySeconds: 20
              periodSeconds: 10
            resources:
              requests:
                cpu: 100m
                memory: 200Mi
            securityContext:
              allowPrivilegeEscalation: false
              readOnlyRootFilesystem: true
              runAsNonRoot: true
              runAsUser: 1000
            volumeMounts:
            - mountPath: /tmp
              name: tmp-dir
          nodeSelector:
            kubernetes.io/os: linux
          priorityClassName: system-cluster-critical
          serviceAccountName: metrics-server
          volumes:
          - emptyDir: {}
            name: tmp-dir
    ---
    apiVersion: apiregistration.k8s.io/v1
    kind: APIService
    metadata:
      labels:
        k8s-app: metrics-server
      name: v1beta1.metrics.k8s.io
    spec:
      group: metrics.k8s.io
      groupPriorityMinimum: 100
      insecureSkipTLSVerify: true
      service:
        name: metrics-server
        namespace: kube-system
      version: v1beta1
      versionPriority: 100
    EOF

Create Metrics Server:

    [root@k8s-01 metrics-server]# kubectl apply -f metrics-server.yaml
    serviceaccount/metrics-server created
    clusterrole.rbac.authorization.k8s.io/system:aggregated-metrics-reader created
    clusterrole.rbac.authorization.k8s.io/system:metrics-server created
    rolebinding.rbac.authorization.k8s.io/metrics-server-auth-reader created
    clusterrolebinding.rbac.authorization.k8s.io/metrics-server:system:auth-delegator created
    clusterrolebinding.rbac.authorization.k8s.io/system:metrics-server created
    service/metrics-server created
    deployment.apps/metrics-server created
    apiservice.apiregistration.k8s.io/v1beta1.metrics.k8s.io created

Check that the service is running:

    [root@k8s-01 metrics-server]# kubectl get pod -n kube-system | grep metrics
    metrics-server-7dbf488976-2m7sc   1/1   Running   0   92s

After about a minute, we can check whether metrics are being collected:

    [root@k8s-01 metrics-server]# kubectl top node
    NAME     CPU(cores)   CPU%   MEMORY(bytes)   MEMORY%
    k8s-01   227m         2%     2508Mi          32%
    k8s-02   175m         4%     1937Mi          24%
    k8s-03   192m         3%     2183Mi          27%
    k8s-04   52m          1%     983Mi           25%
    k8s-05   80m          2%     1713Mi          22%

    [root@k8s-01 metrics-server]# kubectl top pod -n kube-system
    NAME                              CPU(cores)   MEMORY(bytes)
    coredns-64897985d-kbm2r           3m           37Mi
    coredns-64897985d-rshlt           2m           34Mi
    etcd-k8s-01                       44m          142Mi
    etcd-k8s-02                       43m          159Mi
    etcd-k8s-03                       44m          119Mi
    kube-apiserver-k8s-01             64m          697Mi
    kube-apiserver-k8s-02             53m          590Mi
    kube-apiserver-k8s-03             61m          532Mi
    kube-controller-manager-k8s-01    2m           29Mi
    kube-controller-manager-k8s-02    19m          77Mi
    kube-controller-manager-k8s-03    2m           30Mi
    kube-flannel-ds-dmqqc             2m           27Mi
    kube-flannel-ds-hhd4r             2m           19Mi
    kube-flannel-ds-hw8x9             3m           25Mi
    kube-flannel-ds-n2zgv             2m           25Mi
    kube-flannel-ds-qrbz6             2m           26Mi
    kube-proxy-29zp6                  5m           28Mi
    kube-proxy-7g6lr                  6m           29Mi
    kube-proxy-ghh8b                  6m           21Mi
    kube-proxy-tmhnv                  4m           28Mi
    kube-proxy-xlnc2                  1m           30Mi
    kube-scheduler-k8s-01             5m           32Mi
    kube-scheduler-k8s-02             3m           29Mi
    kube-scheduler-k8s-03             3m           29Mi
    metrics-server-7dbf488976-2m7sc   5m           26Mi

Next, deploy VPA.

vertical-pod-autoscaler is a subproject of the kubernetes/autoscaler repository.

Project page: https://github.com/kubernetes/autoscaler/tree/master/vertical-pod-autoscaler

Version compatibility (this article uses Vertical Pod Autoscaler 0.12.0):

| VPA version | Kubernetes version |
|-----------|---------------|
| 0.12 | 1.23+ |
| 0.11 | 1.22+ |
| 0.10 | 1.22+ |
| 0.9 | 1.16+ |
| 0.8 | 1.13+ |
| 0.4 to 0.7 | 1.11+ |
| 0.3.X and lower | 1.7+ |
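The compatibility table can be turned into a quick check before installing. The sketch below copies the minimum Kubernetes versions from the table (the 0.3.X row is left out for brevity); the `is_compatible` helper is a name made up for this example.

```python
# Minimum Kubernetes (major, minor) per VPA minor version, from the table above.
MIN_K8S = {
    "0.12": (1, 23), "0.11": (1, 22), "0.10": (1, 22), "0.9": (1, 16),
    "0.8": (1, 13), "0.7": (1, 11), "0.6": (1, 11), "0.5": (1, 11), "0.4": (1, 11),
}


def is_compatible(vpa_version: str, k8s_version: str) -> bool:
    """Check a VPA version such as '0.12.0' against a cluster version such as '1.23.5'."""
    vpa_minor = ".".join(vpa_version.split(".")[:2])
    k8s_minor = tuple(int(part) for part in k8s_version.split(".")[:2])
    return k8s_minor >= MIN_K8S[vpa_minor]


# The environment used in this article: VPA 0.12.0 on Kubernetes 1.23.5.
print(is_compatible("0.12.0", "1.23.5"))
```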

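Note: the vpa-up.sh script reads the environment variables $REGISTRY and $TAG, which hold the image registry address and the image tag; they default to k8s.gcr.io and 0.12.0 respectively. k8s.gcr.io cannot be reached from our network, so either point $REGISTRY at a registry you can reach or use my package below.

Before running the install script, be sure to upgrade openssl.

My package already has the images and related configuration changed:

Link: https://caiyun.139.com/m/i?165CjuzoXP77q Extraction code: Fmd6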

    $ unzip autoscaler-master.zip
    $ cd autoscaler-master/vertical-pod-autoscaler

    # Run the install script (the uninstall script is ./hack/vpa-down.sh).
    # Do not apply the deployment manifests directly: the script also creates the vpa-tls-certs secret.
    [root@k8s-01 vertical-pod-autoscaler]# ./hack/vpa-up.sh
    customresourcedefinition.apiextensions.k8s.io/verticalpodautoscalercheckpoints.autoscaling.k8s.io created
    customresourcedefinition.apiextensions.k8s.io/verticalpodautoscalers.autoscaling.k8s.io created
    clusterrole.rbac.authorization.k8s.io/system:metrics-reader created
    clusterrole.rbac.authorization.k8s.io/system:vpa-actor created
    clusterrole.rbac.authorization.k8s.io/system:vpa-checkpoint-actor created
    clusterrole.rbac.authorization.k8s.io/system:evictioner created
    clusterrolebinding.rbac.authorization.k8s.io/system:metrics-reader created
    clusterrolebinding.rbac.authorization.k8s.io/system:vpa-actor created
    clusterrolebinding.rbac.authorization.k8s.io/system:vpa-checkpoint-actor created
    clusterrole.rbac.authorization.k8s.io/system:vpa-target-reader created
    clusterrolebinding.rbac.authorization.k8s.io/system:vpa-target-reader-binding created
    clusterrolebinding.rbac.authorization.k8s.io/system:vpa-evictionter-binding created
    serviceaccount/vpa-admission-controller created
    clusterrole.rbac.authorization.k8s.io/system:vpa-admission-controller created
    clusterrolebinding.rbac.authorization.k8s.io/system:vpa-admission-controller created
    clusterrole.rbac.authorization.k8s.io/system:vpa-status-reader created
    clusterrolebinding.rbac.authorization.k8s.io/system:vpa-status-reader-binding created
    serviceaccount/vpa-updater created
    deployment.apps/vpa-updater created
    serviceaccount/vpa-recommender created
    deployment.apps/vpa-recommender created
    Generating certs for the VPA Admission Controller in /tmp/vpa-certs.
    Generating RSA private key, 2048 bit long modulus (2 primes)
    ..........................+++++
    ..................................................+++++
    e is 65537 (0x010001)
    Generating RSA private key, 2048 bit long modulus (2 primes)
    .....................+++++
    .................................................................+++++
    e is 65537 (0x010001)
    Signature ok
    subject=CN = vpa-webhook.kube-system.svc
    Getting CA Private Key
    Uploading certs to the cluster.
    secret/vpa-tls-certs created
    Deleting /tmp/vpa-certs.
    deployment.apps/vpa-admission-controller created
    service/vpa-webhook created

Check that the VPA components have started:

    [root@k8s-01 vpa]# kubectl get pod -n kube-system | grep vpa
    vpa-admission-controller-657c6587bf-d5h2g   1/1   Running   0   2m48s
    vpa-recommender-5874cd9fdb-ldpdp            1/1   Running   0   2m49s
    vpa-updater-5d4c88f799-4kj4x                1/1   Running   0   2m50s

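Creating an Nginx Test Workload

We need a Deployment to test against; I use nginx as an example.

Here I create a Deployment named nginx and a Service named nginx, with resource requests set on the container.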

    cat <<EOF | kubectl apply -f -
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx
    spec:
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - image: nginx:alpine
            name: nginx
            resources:
              requests:
                cpu: 100m
                memory: 250Mi
            ports:
            - containerPort: 80
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx
    spec:
      selector:
        app: nginx
      type: NodePort
      ports:
      - protocol: TCP
        port: 80
        targetPort: 80
        nodePort: 30001
    EOF

The following commands show the resources that were created:

    [root@k8s-01 ~]# kubectl get svc    # the Service is named nginx
    NAME         TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)        AGE
    kubernetes   ClusterIP   10.96.0.1        <none>        443/TCP        95d
    nginx        NodePort    10.106.137.150   <none>        80:30001/TCP   35s

    [root@k8s-01 ~]# kubectl get pod    # the Pod name starts with nginx
    NAME                     READY   STATUS    RESTARTS   AGE
    nginx-8456c6666c-b2j7d   1/1     Running   0          37s

    [root@k8s-01 ~]# kubectl get deployments.apps    # a single nginx Deployment
    NAME    READY   UP-TO-DATE   AVAILABLE   AGE
    nginx   1/1     1            1           44s

The container's requests are set to 0.1 CPU (100m) and 250Mi of memory.

Check that the Service IP is reachable:

    [root@k8s-01 ~]# curl 10.106.137.150 -I
    HTTP/1.1 200 OK
    Server: nginx/1.23.1
    Date: Thu, 22 Sep 2022 12:44:11 GMT
    Content-Type: text/html
    Content-Length: 615
    Last-Modified: Tue, 19 Jul 2022 15:23:19 GMT
    Connection: keep-alive
    ETag: "62d6cc67-267"
    Accept-Ranges: bytes

Creating the VPA Resource

Next, create a VPA resource bound to the nginx Deployment. Save the following as nginx-vpa.yaml:

    # Applied as-is in this walkthrough; autoscaling.k8s.io/v1 is the current API version.
    apiVersion: autoscaling.k8s.io/v1beta2
    kind: VerticalPodAutoscaler
    metadata:
      name: nginx-vpa
    spec:
      targetRef:
        apiVersion: "apps/v1"
        kind: Deployment
        name: nginx
      updatePolicy:
        updateMode: "Auto"
      resourcePolicy:
        containerPolicies:
        - containerName: "nginx"
          minAllowed:
            cpu: "250m"
            memory: "100Mi"
          maxAllowed:
            cpu: "2000m"
            memory: "2048Mi"

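- updateMode: the VPA update mode (Auto is the default)

- name: name of the target Deployment

- containerName: name of the container the policy applies to

- minAllowed: minimum value VPA is allowed to recommend

- maxAllowed: maximum value VPA is allowed to recommend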

Create the VPA object:

    [root@k8s-01 vpa]# kubectl apply -f nginx-vpa.yaml
    verticalpodautoscaler.autoscaling.k8s.io/nginx-vpa created

kubectl get shows the recommendation (if there is no data yet, wait a few seconds):

    [root@k8s-01 vpa]# kubectl get vpa
    NAME        MODE   CPU    MEM       PROVIDED   AGE
    nginx-vpa   Auto   250m   262144k   True       72s
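The memory value 262144k may look odd next to the 250Mi request set on the Deployment, but the two are the same amount: k is a decimal suffix (1000 bytes) while Mi is binary (1024 × 1024 bytes). The following sketch converts both to bytes; the `to_bytes` helper is written for this example and handles only a subset of Kubernetes quantity suffixes.

```python
# Byte multipliers for a subset of Kubernetes quantity suffixes.
UNITS = {
    "k": 10**3, "M": 10**6, "G": 10**9,      # decimal suffixes
    "Ki": 2**10, "Mi": 2**20, "Gi": 2**30,   # binary suffixes
}


def to_bytes(quantity: str) -> int:
    """Convert a memory quantity such as '262144k' or '250Mi' to bytes."""
    # Try two-letter suffixes first so 'Mi' is not mistaken for another suffix.
    for suffix in sorted(UNITS, key=len, reverse=True):
        if quantity.endswith(suffix):
            return int(quantity[: -len(suffix)]) * UNITS[suffix]
    return int(quantity)  # a plain number is already in bytes


print(to_bytes("262144k"))
print(to_bytes("250Mi"))
```

Both calls print 262144000, so the VPA memory recommendation here equals the 250Mi the Deployment already requests.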

describe shows the details:

    [root@k8s-01 vpa]# kubectl describe vpa nginx-vpa | tail -n 20
      Conditions:
        Last Transition Time:  2022-09-22T13:01:20Z
        Status:                True
        Type:                  RecommendationProvided
      Recommendation:
        Container Recommendations:
          Container Name:  nginx
          Lower Bound:
            Cpu:     250m
            Memory:  262144k
          Target:
            Cpu:     250m
            Memory:  262144k
          Uncapped Target:
            Cpu:     25m
            Memory:  262144k
          Upper Bound:
            Cpu:     765m
            Memory:  800697776
    Events:          <none>

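The key fields are:

- Lower Bound: the minimum recommended value

- Target: the recommended value, which is what VPA applies to the Pod

- Uncapped Target: the recommendation before the minAllowed/maxAllowed constraints are applied

- Upper Bound: the maximum recommended value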
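The relationship between Uncapped Target and Target in the output above (25m versus 250m CPU) follows from the minAllowed/maxAllowed policy in nginx-vpa.yaml: the raw recommendation is clamped into the allowed range. A minimal sketch of that clamping, with a function name chosen for this example and ignoring any other constraints VPA may apply:

```python
def cap_recommendation(uncapped_m: int, min_allowed_m: int, max_allowed_m: int) -> int:
    """Clamp a raw CPU recommendation (millicores) into [min_allowed, max_allowed]."""
    return max(min_allowed_m, min(uncapped_m, max_allowed_m))


# Idle nginx: the raw recommendation of 25m is raised to minAllowed (250m).
print(cap_recommendation(25, 250, 2000))
# Under load: 1554m already lies inside the allowed range and is kept.
print(cap_recommendation(1554, 250, 2000))
```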

Testing VPA

Next, load-test the nginx container and watch whether the recommendation shown by describe changes.

    # Load test; the address is the Service IP
    [root@k8s-01 vpa]# ab -c 1000 -n 1000000000 http://10.106.137.150/index.html

Check whether the VPA recommendation has changed:

    [root@k8s-01 vpa]# kubectl get vpa    # before the load test
    NAME        MODE   CPU    MEM       PROVIDED   AGE
    nginx-vpa   Auto   250m   262144k   True       9m55s

    [root@k8s-01 vpa]# kubectl get vpa    # after the load test
    NAME        MODE   CPU    MEM       PROVIDED   AGE
    nginx-vpa   Auto   920m   262144k   True       13m

We can see that the nginx VPA now recommends scaling up:

    [root@k8s-01 vpa]# kubectl describe vpa nginx-vpa
      Recommendation:
        Container Recommendations:
          Container Name:  nginx
          Lower Bound:
            Cpu:     250m
            Memory:  262144k
          Target:
            Cpu:     1554m
            Memory:  262144k
          Uncapped Target:
            Cpu:     1554m
            Memory:  262144k
          Upper Bound:
            Cpu:     2
            Memory:  484642857
    Events:          <none>

Because we use Auto, the default mode, resource requests are changed when a Pod is created and also when it is updated.

We can trigger a change to the Deployment to test this.

    [root@k8s-01 ~]# kubectl get deployments.apps
    NAME    READY   UP-TO-DATE   AVAILABLE   AGE
    nginx   1/1     1            1           137m

Scale out the Deployment:

    [root@k8s-01 ~]# kubectl scale --replicas=3 deployment nginx
    deployment.apps/nginx scaled

    [root@k8s-01 ~]# kubectl get deployments.apps
    NAME    READY   UP-TO-DATE   AVAILABLE   AGE
    nginx   2/3     3            2           156m

Look at the nginx VPA again.

The values have changed:

    [root@k8s-01 ~]# kubectl describe vpa nginx
    Status:
      Conditions:
        Last Transition Time:  2022-09-22T13:01:20Z
        Status:                True
        Type:                  RecommendationProvided
      Recommendation:
        Container Recommendations:
          Container Name:  nginx
          Lower Bound:
            Cpu:     250m
            Memory:  262144k
          Target:
            Cpu:     1643m
            Memory:  262144k
          Uncapped Target:
            Cpu:     1643m
            Memory:  262144k
          Upper Bound:
            Cpu:     2
            Memory:  262144k
    Events:          <none>

The events show that the Pod was updated:

    [root@k8s-01 ~]# kubectl get event | grep EvictedByVPA
    59m   Normal   EvictedByVPA   pod/nginx-8456c6666c-b2j7d   Pod was evicted by VPA Updater to apply resource recommendation.

Now check the Pod's requests again:

    [root@k8s-01 ~]# kubectl describe pod nginx-8456c6666c-pb5nt
        Requests:
          cpu:        1643m
          memory:     262144k
        Environment:  <none>
        Mounts:
          /var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-n9chm (ro)
    Conditions:
      Type              Status
      Initialized       True
      Ready             True
      ContainersReady   True
      PodScheduled      True
    Volumes:
      kube-api-access-n9chm:
        Type:                    Projected (a volume that contains injected data from multiple sources)
        TokenExpirationSeconds:  3607
        ConfigMapName:           kube-root-ca.crt
        ConfigMapOptional:       <nil>
        DownwardAPI:             true
    QoS Class:                   Burstable
    Node-Selectors:              <none>
    Tolerations:                 node.kubernetes.io/not-ready:NoExecute op=Exists for 300s
                                 node.kubernetes.io/unreachable:NoExecute op=Exists for 300s
    Events:                      <none>

The Pod has been recreated automatically, and the nginx container now requests 1643m CPU and 262144k of memory.
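Instead of reading the describe output by eye, the requests can be pulled out of the Pod object programmatically. The sketch below works on a Pod parsed from JSON (the shape produced by kubectl get pod -o json); the `container_requests` helper and the hand-written `sample_pod`, which only mirrors the values shown above, are assumptions made for this example.

```python
import json


def container_requests(pod: dict) -> dict:
    """Map each container name to its resource requests in a Pod object."""
    return {
        container["name"]: container.get("resources", {}).get("requests", {})
        for container in pod["spec"]["containers"]
    }


# Hand-written sample mirroring the requests shown above, not real cluster output.
sample_pod = json.loads("""
{
  "spec": {
    "containers": [
      {
        "name": "nginx",
        "resources": {"requests": {"cpu": "1643m", "memory": "262144k"}}
      }
    ]
  }
}
""")

print(container_requests(sample_pod))
```

Comparing this result with the Target in the VPA status is a quick way to confirm that the Admission Controller applied the recommendation.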

51工具盒子
